Proven Savings at Scale

Every number below is verified at the server plug using a state-of-the-art power analyser — not estimated, not simulated.

llama.cpp

AI Inference Is Energy-Hungry

On a cutting-edge workstation GPU, each generated token carries a significant energy cost. With millions of tokens processed daily, that adds up fast. And without active optimisation, both CPU and GPU hardware are left running at full speed regardless of actual workload demand.

* All Watt-second readings measured at the server wall-socket. Test system: NVIDIA RTX Pro 6000 Blackwell (96 GB VRAM), 24-core workstation, 128 GB RAM, 2,050 W PSU.

vert-suite

A production-ready software platform for autonomous GPU/CPU efficiency orchestration — inference engine agnostic, with no hardware changes, no application modifications, and no manual tuning required.

Autonomous CPU/GPU Optimisation

Autonomous bare-metal GPU and CPU management for AI/LLM workloads. Empirically validated energy savings of over 30% per token — with no changes to your application or inference stack.

Continuous SLA Spectrum

Specify your maximum acceptable throughput reduction. vert-suite automatically scans the GPU/CPU operating envelope and locks in the profile delivering the deepest energy savings within your constraint.

Green & Cost-Efficient

Reduce OpEx, extend platform lifespan, and lower your infrastructure's carbon footprint — without replacing or upgrading hardware.

Deep Monitoring with eBPF

Leverage eBPF for deep system observability with minimal operational disruption — complemented by physical out-of-band telemetry for independent power validation.

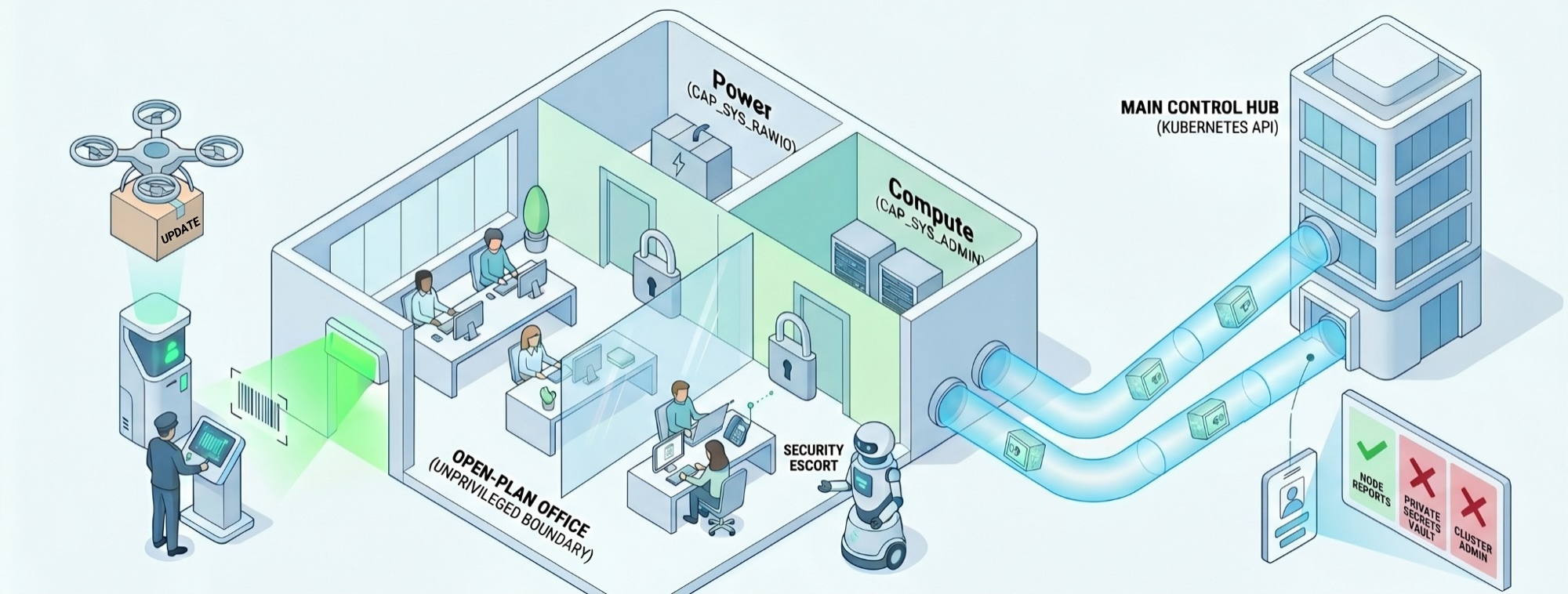

Zero-Trust, Kubernetes-Native

Least-privilege agents, just-in-time capabilities, and mTLS-secured control-plane traffic. Transparent telemetry with out-of-band validation for full auditability.

Seamless Integration

Inference engine agnostic: validated on vLLM and llama.cpp, and compatible with other leading engines. A single all-in-one library that runs across platforms and across Linux and Kubernetes distributions, deployable in minutes with no operators, scheduler extensions, or YAML modifications.

The industry standard is to hand over the keys to the kingdom — permanent, unconditional root access to your kernel, drivers, and hardware. You shouldn't have to compromise your cluster's security just to run your compute efficiently.

Zero-Compromise Security Architecture

vert-suite is built on a strict principle of minimal authority. Instead of deploying a privileged monolith, the architecture is physically split — a standard unprivileged worker that requests just-in-time access only when needed, and only for the exact duration required.

Public key at a terminal — signature verification before any agent is admitted.

Main agent operates as a standard worker within an unprivileged boundary — CAP_SYS_RAWIO and CAP_SYS_ADMIN never granted permanently.

A Security Escort briefly unlocks the required resource, completes the exact task, and immediately relocks — no standing privileges.

All control-plane traffic is encrypted via mTLS tunnels — no plaintext communication between components.

Digital identity verification prevents any component from operating outside its authorised scope.

Install vert-suite

No YAML changes, no Kubernetes operators, no code modifications to your inference stack.

Set Your SLA

Tell vert-suite your maximum acceptable throughput reduction. It autonomously scans the full GPU/CPU operating envelope.

Savings Start Immediately

Real-time CPU/GPU co-optimisation locks in the deepest energy savings within your constraint.

You Set the Constraint. We Find the Optimum.

vert-suite does not offer a fixed menu of profiles. It offers a continuous spectrum. Simply tell the software the maximum throughput reduction you can accept — say, no more than 10%, 15%, or 20% — and it automatically identifies the optimal GPU/CPU operational mode to deliver the deepest possible energy savings within that constraint.
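The selection rule described above can be pictured as a small constrained search: among the operating points whose throughput loss stays within the limit, take the one with the largest energy saving. The sketch below is illustrative only and is not vert-suite's implementation or API. The `Profile` type, the `pick_profile` function, and every number in `CANDIDATES` are hypothetical placeholders, not measurements.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Profile:
    """One candidate GPU/CPU operating point (hypothetical structure)."""
    name: str
    throughput_loss_pct: float  # throughput reduction versus baseline
    energy_saving_pct: float    # energy-per-token reduction versus baseline


def pick_profile(profiles: Iterable[Profile],
                 max_throughput_loss_pct: float) -> Optional[Profile]:
    """Return the profile with the deepest energy saving that stays
    within the throughput constraint, or None if nothing qualifies."""
    eligible = [p for p in profiles
                if p.throughput_loss_pct <= max_throughput_loss_pct]
    if not eligible:
        return None
    return max(eligible, key=lambda p: p.energy_saving_pct)


# Placeholder values for illustration only; these are NOT benchmark results.
CANDIDATES = [
    Profile("a", throughput_loss_pct=4.0, energy_saving_pct=12.0),
    Profile("b", throughput_loss_pct=9.0, energy_saving_pct=20.0),
    Profile("c", throughput_loss_pct=14.0, energy_saving_pct=27.0),
    Profile("d", throughput_loss_pct=19.0, energy_saving_pct=31.0),
]

chosen = pick_profile(CANDIDATES, max_throughput_loss_pct=10.0)
```

With a 10% limit, this sketch selects placeholder profile "b". Raising the limit to 15% selects "c". A real system would scan a continuous operating envelope rather than a short list, but the constraint-then-maximise logic is the same.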

Three example datapoints — any point on the spectrum is achievable

Minimal Impact

The software identifies the energy-saving profile that keeps throughput within 10% of baseline. Deep savings with near-transparent operational impact.

Sweet Spot

A marginal additional throughput budget unlocks significantly deeper energy reductions. Consistently the highest-value point on the spectrum across all tested models.

Maximum Efficiency

Accepts a larger throughput trade-off to push energy savings to their maximum. Ideal for cost-capped batch workloads where latency is not time-critical.

Comprehensive Model Benchmarks

Five leading open-weight models. Three SLA profiles. All energy independently verified at the server wall-socket using a state-of-the-art power analyser — against unoptimised SotA baselines.

* All Watt-second readings measured at the server wall-socket on a single NVIDIA RTX Pro 6000 Blackwell GPU server.

Optimising Autonomous AI Agents

Continuous, zero-intervention AI agent loops create a sustained, demanding inference load. We validated vert-suite in exactly this scenario — using Claude Code acting as an autonomous coding agent running non-stop research iterations on a dedicated GPU server.

What 30% energy savings means in practice

Average UK commercial electricity rate: 25.5p/kWh · 24/7 continuous operation · Savings scale linearly with fleet size
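The arithmetic behind these assumptions can be sketched as follows. The electricity rate, the 30% saving, and 24/7 operation come from the line above. The average power draw per server is not stated here, so the 1.0 kW used in the example is a hypothetical placeholder, and the function name `annual_saving_gbp` is ours. The sketch also assumes the 30% saving applies to total energy consumed for the same workload.

```python
HOURS_PER_YEAR = 24 * 365  # continuous 24/7 operation, non-leap year


def annual_saving_gbp(avg_power_kw: float,
                      servers: int = 1,
                      saving_fraction: float = 0.30,
                      rate_gbp_per_kwh: float = 0.255) -> float:
    """Estimated yearly electricity cost saving in GBP.

    avg_power_kw is the unoptimised average draw per server at the
    wall socket; it must be supplied from your own measurements.
    """
    baseline_kwh = avg_power_kw * HOURS_PER_YEAR * servers
    return baseline_kwh * saving_fraction * rate_gbp_per_kwh


# Hypothetical example: one server averaging 1.0 kW at the wall.
single = annual_saving_gbp(1.0)
# Savings scale linearly with fleet size.
fleet_of_ten = annual_saving_gbp(1.0, servers=10)
```

Under the placeholder 1.0 kW draw, this works out to roughly £670 per server per year (8,760 kWh × 0.30 × £0.255), and ten such servers save ten times that. Substitute a measured wall-socket average to get a figure for a real fleet.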